SlovakBERT, the first public Slovak neural language model

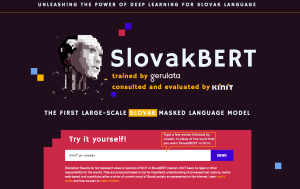

KInIT and Gerulata Technologies introduced SlovakBERT, a new language model for Slovak, which will help improve the automatic processing of texts written in Slovak.

Such models were initially created mainly for English and subsequently for other widely used languages, such as Chinese or French. Models for smaller languages, such as Czech and Polish, appeared later. Multilingual models are now available as well.

The model was trained by our partner, Gerulata Technologies; our NLP team consulted on its development and evaluated it scientifically. SlovakBERT learned Slovak from about 20 GB of Slovak text collected from the Web. To the model, this data is a snapshot of what the Slovak language looks like.

Try it out!

You can learn more about SlovakBERT and experiment with how it works. Just visit the dedicated website and try it out for yourself.

Training SlovakBERT required almost two weeks of computation on a powerful server. By comparison, a computer with a mid-range graphics card might take years to finish the same computation, and a regular work laptop perhaps decades. SlovakBERT is now open to the world and accessible [1] to the NLP community. We believe that this step will improve the level of automated Slovak language processing for researchers, companies, and the general public.
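For readers who want to try the released model from code, here is a minimal sketch of a masked-word query. It assumes the model is published on the Hugging Face Hub under the identifier `gerulata/slovakbert` and uses the `<mask>` token; neither detail is stated in this article. The helper names `mask_word` and `predict_masked` are hypothetical and exist only for illustration.

```python
def mask_word(sentence: str, word: str, mask_token: str = "<mask>") -> str:
    """Replace the first occurrence of `word` in `sentence` with the mask token."""
    if word not in sentence:
        raise ValueError(f"{word!r} does not occur in the sentence")
    return sentence.replace(word, mask_token, 1)


def predict_masked(masked_sentence: str,
                   model_id: str = "gerulata/slovakbert",  # assumed Hub identifier
                   top_k: int = 5):
    """Return (token, score) pairs the model proposes for the masked position."""
    # Imported lazily so that mask_word works without transformers installed.
    from transformers import pipeline

    fill_mask = pipeline("fill-mask", model=model_id)
    candidates = fill_mask(masked_sentence, top_k=top_k)
    return [(c["token_str"], c["score"]) for c in candidates]
```

Calling `predict_masked(mask_word("Bratislava je hlavné mesto Slovenska.", "mesto"))` would download the model on first use and return its most likely fillers for the hidden word. The actual candidates and scores depend on the released weights, so none are shown here.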

We described the results of our experiments in the publicly available article SlovakBERT: Slovak Masked Language Model [2]. The model performed so well that we are already using it in projects with our industry partners, and it might soon appear in the first deployed applications, for example in an upcoming system for analysing the sentiment of customer communication on public social network profiles.

[1] SlovakBERT at GitHub

[2] Matúš Pikuliak, Štefan Grivalský, Martin Konôpka, Miroslav Blšták, Martin Tamajka, Viktor Bachratý, Marián Šimko, Pavol Balážik, Michal Trnka, Filip Uhlárik. 2021. SlovakBERT: Slovak Masked Language Model.

This research was realized thanks to the support of the Ministry of Education, Science, Research and Sports of the Slovak Republic.