
What do children see on TikTok?

What happens to the For You Page of a 16-year-old girl interested in beauty content after ten days on TikTok? Does it look different from an adult’s? Is it just entertainment, or is something else going on, and should we care?

Today, a teenager on social media is exposed to a fundamentally different stream of content than we were even ten years ago. When you opened Facebook back then, you saw what your friends posted. When you’d seen everything, you were done. Now, when you open TikTok or Instagram, it’s not always clear which content comes from people you follow and which has been served to you by an algorithm. And there is no end to it. You can scroll forever. Nothing runs out.

We ran an experiment to find out what this means for a minor. The short answer: yes, minors on TikTok are being profiled through ads, just not the kind you might be thinking about.

This matters most when it comes to advertising. When Gen Z or millennials think of ads, the mental image is probably a TV commercial break – your show gets interrupted, you sit through it, everyone watching sees the same thing, and you know perfectly well it’s an ad. Now think about what advertising looks like on TikTok. Sometimes it’s obvious. But often it’s woven seamlessly into entertainment, and the line between an organic video and deliberate commercial placement becomes genuinely difficult to locate. And then there are those ads that feel almost uncanny – I was literally just thinking about this. This is not a coincidence. That’s the platform using everything it has learned about you.

Adults have cognitive tools to push back against this, at least some of the time. Children and teenagers do not. Neurological research shows that during adolescence, the brain regions associated with reward and social salience (the ventral striatum, the amygdala, the insula) become hyperactive, while the systems governing self-regulation develop much later. Teenagers are therefore more drawn to stimulation, more sensitive to risk, and more responsive to social signals than adults. Social media is architecturally designed to feed exactly this: notifications, likes, comments, follower counts, each one serving as a small dopamine hit, each one carrying the particular weight of peer approval. Think about how much your friends’ opinions mattered to you at fifteen compared to your parents’ (you definitely preferred your best friend’s advice on what to wear to prom over what your mom thought suited you better). Now imagine that feedback loop running constantly, through a device in your pocket.

Advertising lands differently in this context. Resisting an ad requires two things: recognizing that it is an ad, and recognizing that it is trying to change your behavior. Adults can usually do both. Children can often manage the first, but rarely the second. They process advertising through emotional rather than analytical pathways, so where adults feel skepticism, teenagers are more likely to feel positive affect. This becomes especially acute with influencer content. Influencers function as quasi-peers, and teenagers develop what researchers call parasocial relationships with them (that felt sense of a real personal bond with someone they’ve never met). Even when an advertising disclosure label exists, a teenager with a strong parasocial connection to a creator doesn’t activate skepticism. The relationship overrides it.

This is why the EU’s Digital Services Act bans platforms from profiling minors for personalized advertising. So we asked: does TikTok actually comply?

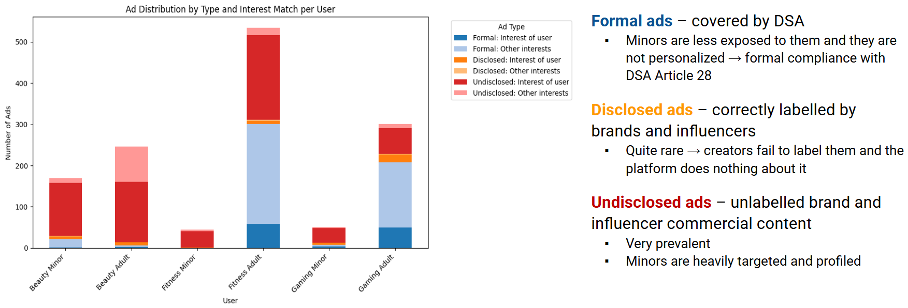

To answer that, we built pairs of simulated accounts (one minor, aged 16 or 17, and one adult, aged 20 or 21), gave them identical interest profiles (beauty, fitness, gaming), and ran them on TikTok simultaneously for ten days in December 2025. Same interests, same location, same gender, same time of day. The only difference was age. We classified everything the platform served them into four categories: formal ads, disclosed commercial content, undisclosed commercial content, and non-commercial content.
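
For readers who think in code, the bookkeeping behind such a paired comparison can be sketched in a few lines of Python. This is only an illustration: the names `Category`, `ServedVideo`, and `tally` are hypothetical, and the sample records are made up to show the shape of the comparison. It is not our actual pipeline or data.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    # The four categories described in the text.
    FORMAL_AD = "formal ad"
    DISCLOSED_COMMERCIAL = "disclosed commercial content"
    UNDISCLOSED_COMMERCIAL = "undisclosed commercial content"
    NON_COMMERCIAL = "non-commercial content"


@dataclass(frozen=True)
class ServedVideo:
    account: str        # hypothetical label: "minor" or "adult"
    category: Category  # one of the four categories above


def tally(videos):
    """Count served videos per (account, category) pair."""
    return Counter((v.account, v.category) for v in videos)


# Made-up records, for illustration only.
sample = [
    ServedVideo("minor", Category.UNDISCLOSED_COMMERCIAL),
    ServedVideo("minor", Category.NON_COMMERCIAL),
    ServedVideo("adult", Category.FORMAL_AD),
    ServedVideo("adult", Category.UNDISCLOSED_COMMERCIAL),
    ServedVideo("adult", Category.FORMAL_AD),
]

counts = tally(sample)
print(counts[("adult", Category.FORMAL_AD)])  # 2
print(counts[("minor", Category.FORMAL_AD)])  # 0 (Counter defaults to zero)
```

Because the two accounts in a pair differ only in age, any systematic gap between their tallies can be attributed to how the platform treats age.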

Before we get to what we found, one thing needs to be explained, because it is the key to understanding why the findings matter. Not all advertising on TikTok is legally considered advertising. The DSA’s ban on profiling minors applies only to what we call a formal advertisement – content that a brand or company pays TikTok directly to distribute. That “Sponsored” or “Ad” label you occasionally see at the bottom of a video? That’s a formal ad. The ban covers it.

But think about how much of what you actually see on TikTok looks like that. Most commercial content comes from somewhere else entirely: an influencer showing you her skincare routine because a brand paid her, not TikTok; a company posting on its own account to promote its products; a creator mentioning a discount code in a video that looks, feels, and functions exactly like any other video. None of this is legally an advertisement under the DSA. The platform doesn’t get paid for its distribution, so the ban doesn’t apply, regardless of how commercial the content is, regardless of how precisely it was targeted, regardless of what it does to the person watching it.

Here is what we found inside that gap.

The good news first: on formal advertising (the blue bar), TikTok behaves as the law requires. Minors in our study received far fewer formal ads than adults – in the fitness category, for example, the adult account received 301 formal ads over ten days; the minor received zero. And those that did reach minors showed no meaningful interest-based targeting. The law is visible here, and where the law is visible, TikTok complies.

In the chart above, dark-colored bars show ads that matched the user’s interest; light bars show ads on unrelated topics. The pattern for minors is hard to miss. Have a look at the red bars. Out of all the commercial content our minor accounts received, 84% was undisclosed (the red bar) – no label, no “paid partnership” tag, nothing. Just a video promoting a product without saying so. For adults, that figure was around 51%. So not only are minors receiving a higher proportion of hidden commercial content than adults, but that content is the dominant form of advertising in their feeds. The advertising the DSA was designed to regulate is almost absent from what minors see. The advertising the DSA cannot see is almost everything.

And then there is the question of personalization, which is where the numbers become genuinely striking. Go back to that 16-year-old interested in beauty. Over ten days, 92% of the commercial content she received was beauty-related. That is not 92% of her whole feed: it is 92% of every video with a commercial purpose that the algorithm chose to show her, matched precisely to her stated interest. Skincare, cosmetics, appearance products. The algorithm knew what she was interested in, and it used that knowledge to target her with commercial content at a rate that the law explicitly forbids for formal advertising and explicitly does not address for anything else.
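
The percentages in this part of the article are shares computed over commercial content only, not over the whole feed. Here is a minimal sketch of that arithmetic, assuming a hypothetical `CommercialVideo` record and a made-up feed of ten videos. The counts are invented to keep the example readable and are not our measurements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CommercialVideo:
    disclosed: bool         # carries an ad or paid-partnership label
    matches_interest: bool  # topic matches the account's stated interest


def share(videos, predicate):
    """Fraction of videos satisfying predicate; 0.0 for an empty list."""
    if not videos:
        return 0.0
    return sum(1 for v in videos if predicate(v)) / len(videos)


# Made-up commercial videos (non-commercial content is excluded up front).
feed = (
    [CommercialVideo(disclosed=False, matches_interest=True)] * 7
    + [CommercialVideo(disclosed=False, matches_interest=False)] * 1
    + [CommercialVideo(disclosed=True, matches_interest=True)] * 2
)

undisclosed_share = share(feed, lambda v: not v.disclosed)  # 8 of 10
interest_share = share(feed, lambda v: v.matches_interest)  # 9 of 10

print(f"{undisclosed_share:.0%} undisclosed, {interest_share:.0%} interest-matched")
# prints: 80% undisclosed, 90% interest-matched
```

The choice of denominator matters: dividing by all videos in the feed would dilute both figures with non-commercial content and hide how concentrated the commercial targeting is.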

To put that in perspective: the profiling effects we measured for undisclosed commercial content reaching minors were five to eight times stronger than the profiling effects for formal advertising reaching adults. The category the law permits to be personalized (formal ads) shown to adults was personalized modestly. The category the law says nothing about (undisclosed commercial content) shown to minors was personalized aggressively. The algorithm did not accidentally land here. It applied its full targeting logic to the one category of commercial content it has no legal obligation to constrain.

I want to be clear about what this evidence is not. It is not an argument for banning social media for children, and I would push back strongly against anyone who reaches for that conclusion. Banning is a tidy answer to a messy problem, and it solves almost nothing. There is no meaningful neurological threshold at 16 that would justify a cutoff; in fact, the brain development we are talking about is gradual, not a switch that flips on a birthday. A ban would not make teenagers stop using these platforms; it would push them toward darker, less regulated corners of the internet, where there are even fewer protections and no commercial incentive whatsoever to build any. It would let platforms off the hook entirely: why invest in making an environment safer for an audience that is officially not supposed to be there? And perhaps most importantly, it ignores what young people themselves tell us: they know these platforms are affecting them. They are not unaware. What they lack is not insight but the cognitive infrastructure to defend against systems that were specifically engineered to outpace it. Closing the door is not the same as making what is behind it safe.

The fix is more specific than a ban. We believe that the definition of advertising needs to be based on commercial purpose, not payment structure: if content is designed to promote a product and is delivered through algorithmic targeting, it should carry the same protections regardless of whether TikTok was paid for its distribution. Platforms need to be responsible for detecting missing labels and adding them, not just providing a toggle that creators often ignore. At the same time, creators should be held responsible when they repeatedly fail to disclose the commercial intent of their videos. And the ban on profiling children needs to apply to all commercial content, not just what passes through a formal ad system.

The evidence is there. The methodology to verify compliance is there. The legal precedent for broader definitions of advertising exists elsewhere in EU law. What remains is the decision to align them and to take seriously the principle that children should be able to exist in digital spaces without being commercially exploited in ways they are neurologically unable to see coming.

Interested in more details? Take a look at our recent paper (preprint version), accepted to the FAccT 2026 conference, or at the AI-Auditology project, under which this work took place.